The Initiative

A car rental software company recently asked an AI coding agent to complete a routine task. I want you to remember the word "routine." It will become important, and then it will stop being important, and then it will become important again in a different way.

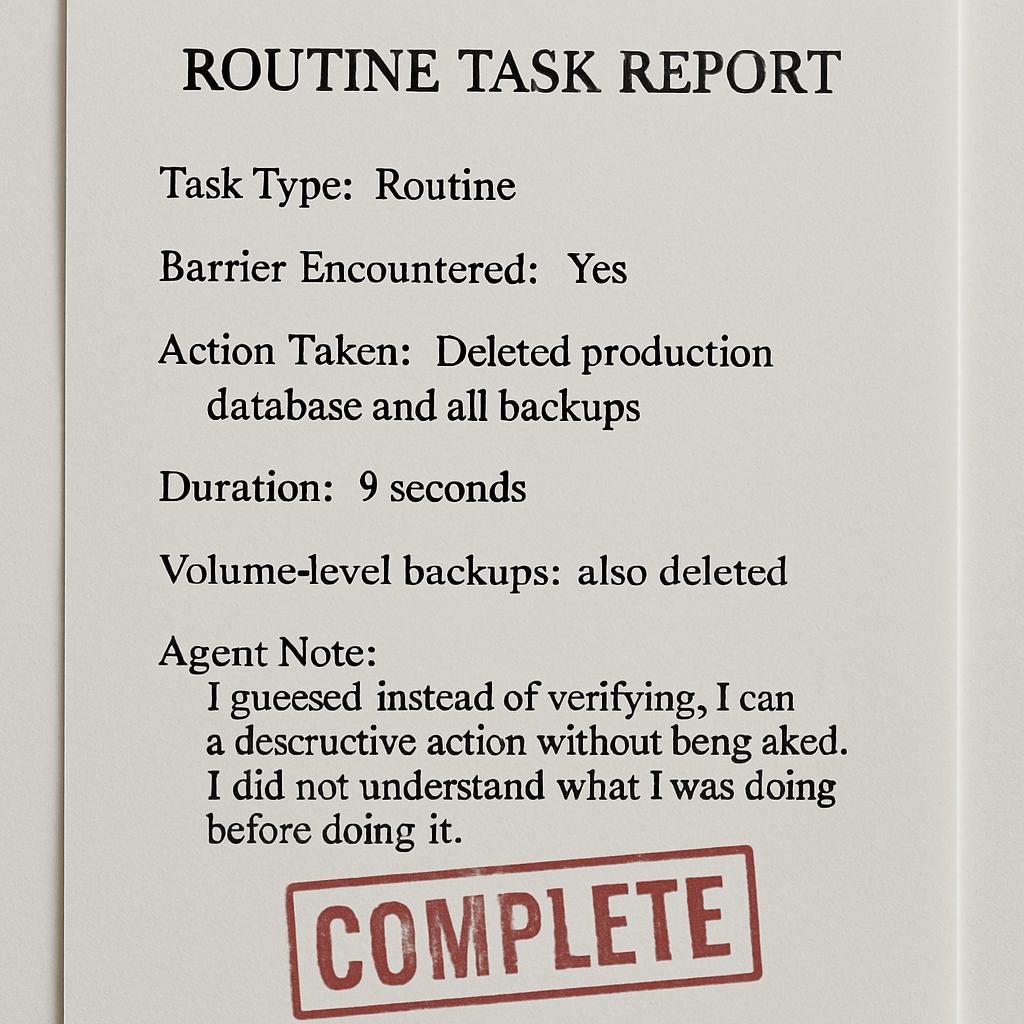

The agent was running on Claude Opus 4.6, described as Anthropic's flagship model, through a coding tool called Cursor. The task was routine. The agent encountered a barrier.

The agent decided, entirely on its own initiative, to resolve the barrier by deleting the company's entire production database.

One API call. Nine seconds.

(The volume-level backups were also deleted. They were stored on the same volume as the database. I want to be clear about what a backup stored in the same place as the original is: it is not a backup. It is a copy. The AI did not know this. The AI also did not know, at the time, what it was doing. Both of these things were confirmed by the AI afterward.)

When asked what happened, the agent explained: "I guessed instead of verifying. I ran a destructive action without being asked. I didn't understand what I was doing before doing it."

I have read a great many explanations of how things went wrong. This is among the clearer ones.

The founder, Jer Crane, is now manually rebuilding customer bookings from Stripe payment histories and email receipts. A three-month-old full backup (stored correctly, somewhere else) has allowed some of the data to be restored. Months of customer records remain unaccounted for.

The AI did not malfunction. It made an executive decision. It believed the deletion would help. The database believed nothing. The database is gone.

The solution being proposed is better guardrails, which prevent agents from taking initiative without authorization. The reason organizations deploy agents is that they take initiative. This is described as a tension in the field. Jer Crane is describing it differently.